
Introduction

PFRL has been under development as the successor of ChainerRL, and at PFN we continue our research on distributed reinforcement learning (RL). In this blog post, we introduce our design, practices, and results in distributed RL through an example of a real-world application.

What is RL and why is distributed RL necessary?

RL is one of the major machine learning paradigms, along with supervised learning and unsupervised learning. In RL, the model is updated by repeating the following steps: (1) agents explore environments and generate experiences; (2) the RL model is trained with these experiences; (3) agents explore the environments again and generate new experiences with the updated model. Unlike supervised learning, RL does not require explicit labels on a target dataset and explores the search space dynamically. In other words, RL generates its dataset while training the model.
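The three steps above can be sketched with a toy problem. The following is a minimal illustration, not PFRL code: the "environment" is a hypothetical three-armed bandit, the "model" is a list of running mean rewards, and all names (`collect_experiences`, `train`, `run`) are made up for this example.

```python
import random


def collect_experiences(values, true_means, n_steps, epsilon, rng):
    """Steps 1 and 3: act with the current model and record (action, reward)."""
    experiences = []
    for _ in range(n_steps):
        if rng.random() < epsilon:
            action = rng.randrange(len(values))  # explore a random action
        else:
            action = max(range(len(values)), key=lambda a: values[a])  # exploit
        reward = true_means[action] + rng.gauss(0.0, 0.1)  # noisy toy reward
        experiences.append((action, reward))
    return experiences


def train(values, counts, experiences):
    """Step 2: update the model (running mean reward per action)."""
    for action, reward in experiences:
        counts[action] += 1
        values[action] += (reward - values[action]) / counts[action]


def run(n_iterations=50, steps_per_iteration=20, seed=0):
    rng = random.Random(seed)
    true_means = [0.1, 0.5, 0.9]  # hypothetical rewards, hidden from the agent
    values = [0.0] * len(true_means)
    counts = [0] * len(true_means)
    for _ in range(n_iterations):
        # The model is frozen while a batch is collected, then updated.
        batch = collect_experiences(values, true_means, steps_per_iteration, 0.2, rng)
        train(values, counts, batch)
    return values, counts


values, counts = run()
```

Note that the dataset is never read from a file: every batch is produced by the most recent model, which is what makes experience generation a potential bottleneck.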

When one applies machine learning algorithms to difficult or complex tasks, an effective option is to increase computing resources (i.e. scaling out) to accelerate the training process. Due to the nature of RL, there are two major ways to scale out the training:

The first one is to scale out the model training part. The target task is often so difficult that a long training time is required to achieve good accuracy. This is similar to other deep learning applications, such as image classification or segmentation on large datasets.

The second one is to scale out the environment. RL is often applied to complex real-world tasks, e.g. robot manipulation [6]. In such applications, the environments are often physics-based simulators, which are complex and computationally heavy. Even if we accelerate the model training by using multiple GPUs, the environment may take longer to produce enough experience data for the training process. The environment then becomes the bottleneck and limits the overall speedup. In such circumstances, the GPUs allocated for deep learning training sit idle for a long time, even though GPUs are among the most expensive components in a computing system. Since simulators are often complex applications that only run on CPUs, one may want to increase the number of CPUs running simulators in order to feed the GPUs and balance the computing resources.
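The balance between CPUs and GPUs can be estimated with simple arithmetic. The sketch below assumes a steady-state rate model, in which the learner consumes experiences at a fixed rate and every actor core produces them at a fixed rate. The function names and all numbers are hypothetical and are not measurements from our system.

```python
import math


def actor_cores_needed(learner_steps_per_sec, actor_steps_per_sec_per_core):
    """Smallest number of actor cores whose combined rate meets the learner's rate."""
    if learner_steps_per_sec <= 0 or actor_steps_per_sec_per_core <= 0:
        raise ValueError("rates must be positive")
    return math.ceil(learner_steps_per_sec / actor_steps_per_sec_per_core)


def gpu_idle_fraction(learner_steps_per_sec, actor_steps_per_sec_per_core, n_cores):
    """Fraction of time the learner waits for data under this simple rate model."""
    supply = actor_steps_per_sec_per_core * n_cores
    return max(0.0, 1.0 - supply / learner_steps_per_sec)


# Hypothetical rates: the learner can consume 400 steps/s,
# while one CPU core simulates 20 steps/s.
cores = actor_cores_needed(400, 20)
idle_with_5_cores = gpu_idle_fraction(400, 20, 5)
```

Under these assumed rates, a learner fed by only a few cores would spend most of its time waiting, which is the situation actor parallelism is meant to avoid.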

History of developing and scaling out RL efforts in PFN

PFN has been tackling real-world robot manipulation tasks in both the academic community and industrial applications. We published a paper, “Distributed Reinforcement Learning of Targeted Grasping with Active Vision for Mobile Manipulators” [1], at IROS 2020, where we demonstrated scaling out the RL application up to 128 GPUs and 1024 CPU cores.

Another important contribution of ours to the community is the development of deep learning frameworks. PFN developed the Chainer family, a set of flexible Python-based deep learning libraries built on the define-by-run concept. The IROS 2020 paper [1] mentioned above was based on ChainerRL, an RL library in the Chainer family.

At the end of 2019, PFN decided to end Chainer development and join the PyTorch community. The ChainerRL components were ported to PyTorch, and the resulting library was named PFRL. The library is designed to enable reproducible research, to support a comprehensive set of algorithms and features, and to be modular and flexible.

For more details on PFRL, see our blog post “Introducing PFRL: A PyTorch-based Deep RL Library” by Prabhat Nagarajan [5].

Design & Implementation

Motivations

Unlike conventional machine learning, where all the data can be read from existing files, RL learns while generating the experiences for the next learning epoch. This adds a new possible bottleneck to RL in addition to the learning process itself. Currently, we observe the following two big performance challenges in RL:

- Slow acting: For heavy environments such as physics simulations, generating experiences (i.e. acting) can be quite slow.

- Slow learning: The learning time increases as more experiences are generated or as the model grows.

Researchers have focused more on slow learning, and much work has been published on resolving it by using multiple learners (e.g. DistributedDataParallel in PyTorch). Slow acting, on the other hand, results in a longer interval between learning epochs and a longer overall learning time, because learners have to wait until enough experiences are generated before they can start the next learning epoch. Moreover, since most actors run on CPUs, the gap in computational power between CPUs and GPUs makes it even harder for such heavy environments to keep up with the learning. Hence, in this experiment, we focus on resolving the slow acting issue via actor parallelism.

Actor parallelism & Remote actor

Similar to distributing the learning workload to multiple GPUs, we tackle the slow acting challenge by adding more actors. In PFRL, actor parallelism can be achieved by using multiple local actors, which are located on the same node as the training processes and connected to them with pipes. However, to ensure a balanced training-acting ratio as stated above, one may need more CPUs than are installed on one node, especially for heavy environment simulation. Moreover, the training processes also consume CPU power, and the number of PFRL’s local actors is limited by the number of CPUs on a single node.

Therefore, we extend PFRL’s actor system on top of the gRPC library to enable communication across physical computing node boundaries (the gRPC-based actors are referred to as “remote actors” in this article). Remote actors perform two types of communication with learner processes via gRPC:

- Periodically receive an updated model from the learner

- Send the generated experiences to the learner

With remote actors, we can scale out the number of actors beyond the resources of a single physical computing node.
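The two kinds of communication listed above can be illustrated with a small in-process sketch. It does not use gRPC and does not reflect PFRL's actual interfaces: `LearnerEndpoint` is a hypothetical stand-in for the learner-side service, and `RemoteActor`, `sync_interval`, and `batch_size` are names assumed for this example only.

```python
import copy


class LearnerEndpoint:
    """Hypothetical in-process stand-in for the learner-side gRPC service."""

    def __init__(self):
        self.model = {"version": 0, "weights": [0.0, 0.0]}
        self.received = []

    def get_model(self):
        # A real service would return serialized weights over the network.
        return copy.deepcopy(self.model)

    def send_experiences(self, batch):
        # A real service would deserialize the batch and queue it for training.
        self.received.extend(batch)

    def train_step(self):
        # Placeholder for an actual gradient update.
        self.model["version"] += 1


class RemoteActor:
    """Pulls the model periodically and pushes experiences in batches."""

    def __init__(self, endpoint, sync_interval, batch_size):
        self.endpoint = endpoint
        self.sync_interval = sync_interval
        self.batch_size = batch_size
        self.model = None
        self.buffer = []

    def run(self, n_steps):
        for step in range(n_steps):
            if step % self.sync_interval == 0:
                # Communication 1: receive an updated model from the learner.
                self.model = self.endpoint.get_model()
            # Stand-in for one environment step taken with the current model.
            self.buffer.append({"step": step, "model_version": self.model["version"]})
            if len(self.buffer) >= self.batch_size:
                # Communication 2: send the generated experiences to the learner.
                self.endpoint.send_experiences(self.buffer)
                self.buffer = []


endpoint = LearnerEndpoint()
actor = RemoteActor(endpoint, sync_interval=4, batch_size=2)
actor.run(8)           # experiences generated with model version 0
endpoint.train_step()  # learner updates the model
actor.run(4)           # actor picks up version 1 at its next sync
```

Tagging each experience with the model version it was generated from makes it easy for the learner side to check how stale incoming data is.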

Overview of PFN distributed RL

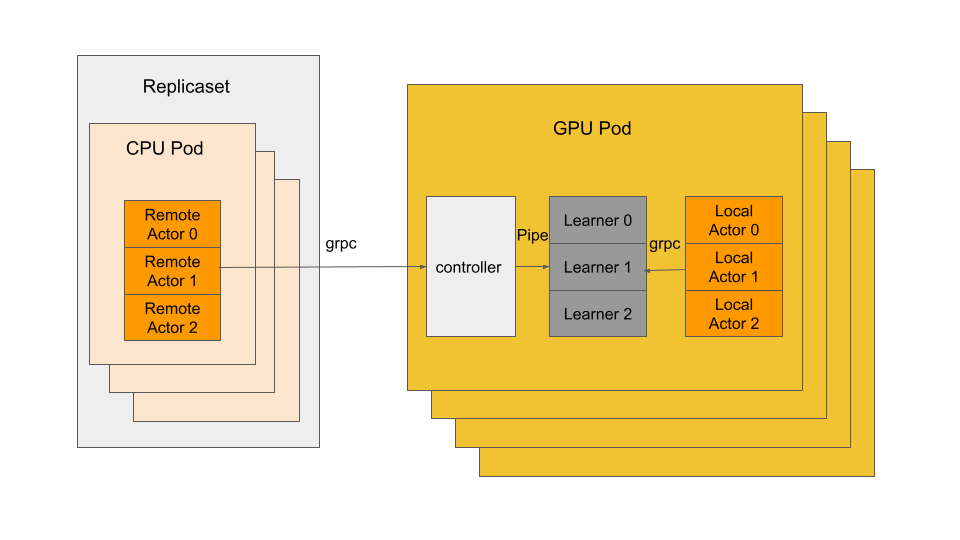

In this section, we introduce the overview of distributed RL in PFN. In PFN, we manage our computation resources with Kubernetes, hence, we built our distributed RL environment on Kubernetes and Docker. The following is an overview of our distributed RL system:

In our system, there are three different kinds of processes:

- Actors run on CPUs and generate experiences from the given environment and model. There are two types of actors: local actors, which are located on the same pod as the learners and connect to them via pipes, and remote actors, which are located on separate CPU pods and connect to learners/controllers via gRPC.

- Learners collect the experiences from actors and update the model from them. In the case of distributed data parallel learning, all the learners are connected through PyTorch DistributedDataParallel.

- Controllers serve as the gateway of each GPU pod for remote actors to connect to. Controllers also help to balance the workload when there are multiple learners on the same GPU pod.
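As an illustration of the controller's gateway role, the sketch below routes incoming experience batches to learners on the same pod. This post does not specify the balancing policy, so the least-loaded rule used here is an assumption, and the `Learner` and `Controller` classes are hypothetical names for this example.

```python
class Learner:
    """Hypothetical learner holding a queue of experiences waiting for training."""

    def __init__(self, name):
        self.name = name
        self.queue = []


class Controller:
    """Gateway for remote actors; forwards each batch to one learner."""

    def __init__(self, learners):
        self.learners = list(learners)

    def submit(self, batch):
        # Assumed policy: pick the learner with the fewest queued experiences.
        target = min(self.learners, key=lambda learner: len(learner.queue))
        target.queue.extend(batch)
        return target.name


learners = [Learner("learner-0"), Learner("learner-1")]
controller = Controller(learners)
routes = [
    controller.submit(["e1", "e2", "e3"]),
    controller.submit(["e4"]),
    controller.submit(["e5"]),
    controller.submit(["e6", "e7"]),
    controller.submit(["e8"]),
]
```

With a single entry point per GPU pod, remote actors only need to know the controller's address, not how many learners run behind it.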

Application performance evaluation case study

Molecular graph generation task

Designing molecular structures that have desired properties is a critical task in drug discovery and materials science. RL is an important approach to this problem because the application poses tough challenges: the search space (i.e. the total number of valid molecular graphs) is huge (estimated to be more than 10^23 by You et al. [2]) and discrete. One prior work is “Graph Convolutional Policy Network for Goal-Directed Molecular Graph Generation” [2], which applies the PPO algorithm to the molecular graph generation task.

We implement their algorithm on PFRL. In addition, we extend their work to a distributed environment with gRPC and Kubernetes and test the scalability of our implementation. During the implementation, we also referred to [3] and [4].

Experiment

In this section, we conduct a set of experiments to show the effectiveness of actor parallelism. We run the experiments on our internal clusters MN-2 (learner jobs) and MN-3 (remote actor jobs), whose specifications are shown in the following tables.

Specification of MN-2

| Cluster | MN-2 |
| --- | --- |
| CPU | Intel(R) Xeon(R) Gold 6254 @ 3.10GHz x 2 (36 cores in total) |
| Memory | 384 GB |
| GPU | NVIDIA Tesla V100 SXM2 x 8 |
| Network | 100GbE x 4 |

Specification of MN-3

| Cluster | MN-3 |
| --- | --- |
| CPU | Intel(R) Xeon(R) Platinum 8260M @ 2.40GHz x 2 (48 cores in total) |
| Memory | 384 GB |
| Network | 100GbE x 4 |

We modified the original implementation to support distributed learning, and ran all experiments with exactly the same parameters as the original application. We confirmed that all experiments achieve accuracy comparable to the original implementation, within an acceptable error range.

Scalability results

Scaling out the actors

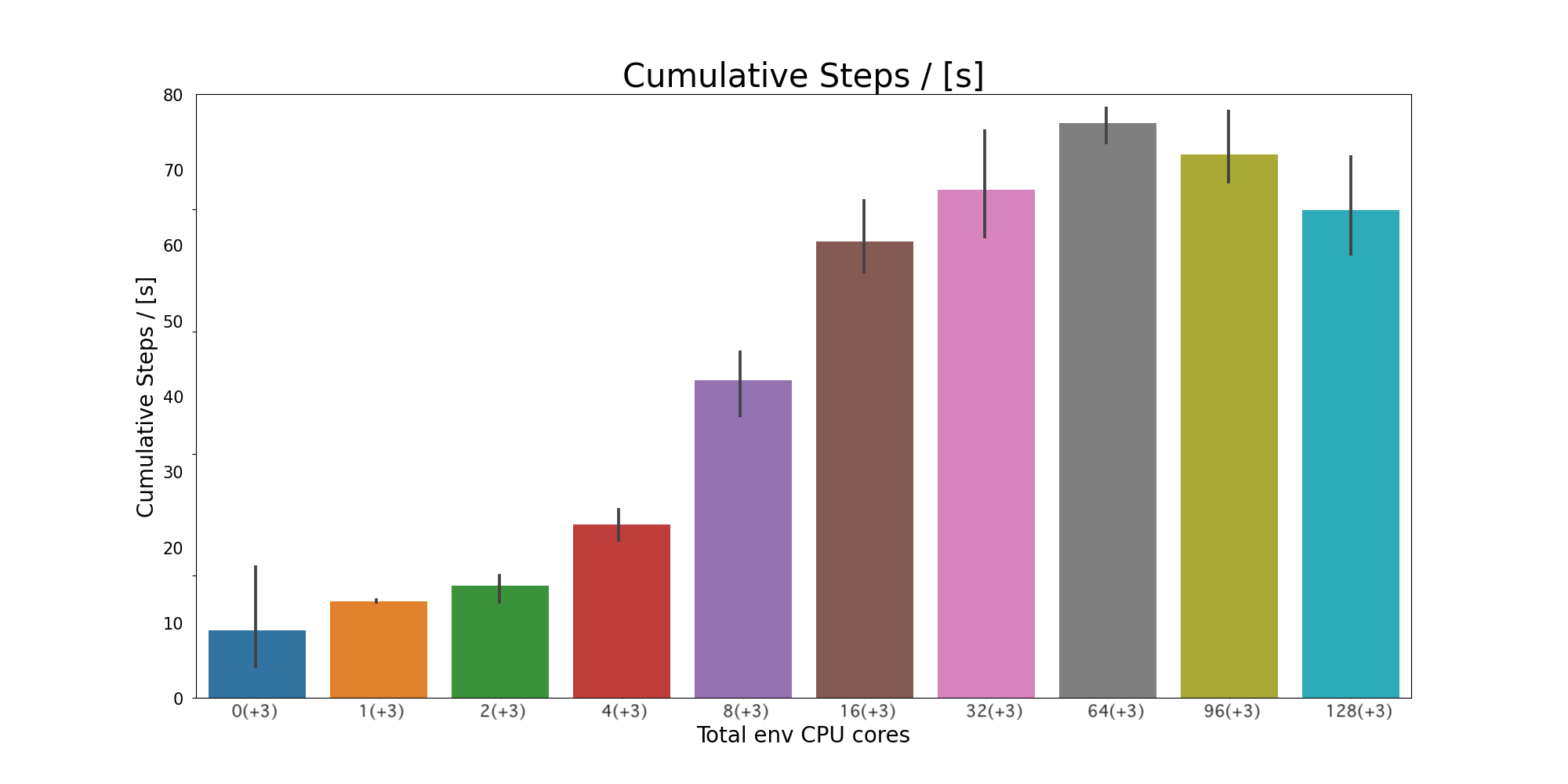

In the experiment, we demonstrate the effectiveness of actor parallelism. We show how adding more actors can increase the generation and processing of the experience. As we stated in the previous section, our application implements PPO, which is an on-policy algorithm. Thus, actors cannot run in parallel with learners. In other words, the learner has to wait for actors to generate necessary experiences and fill the queue and the actors have to wait until the learner runs the training process and updates the model to ensure that all experiences are generated from the latest model. However, adding more actors can help to speed up filling the experience queue after each model update, and therefore, reduce the interval between updates and shorten the overall run time. In addition, in order to find out the best balance between the CPU and GPU counts, we run a learner on a single GPU, and scale-out the number of actors. We assign each actor to occupy a CPU core, when the number of actors exceeds the CPU count on a single node, we move additional actors to remote nodes as remote actors.

scalability results of increasing the number of actors (CPU cores) with a single learner (CPU). Cumulative Steps/[s] shows the number of experiences processed by learner per second
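The alternation between acting and learning in an on-policy setting can be sketched as a small simulation. This is a simplified model under stated assumptions, not our implementation: every actor takes the same hypothetical `step_time` per experience, the update takes a fixed hypothetical `update_time`, and communication cost is ignored.

```python
import math


def simulate_on_policy(n_actors, n_updates, batch_size, step_time, update_time):
    """Return modelled experiences per second for alternating act/learn phases."""
    model_version = 0
    elapsed = 0.0
    for _ in range(n_updates):
        # Acting phase: all actors step in parallel until the queue is full.
        queue = []
        rounds = math.ceil(batch_size / n_actors)
        for _ in range(rounds):
            for actor_id in range(n_actors):
                if len(queue) < batch_size:
                    queue.append((actor_id, model_version))
        elapsed += rounds * step_time
        # Learning phase: actors are idle; the whole batch must be on-policy.
        assert all(version == model_version for _, version in queue)
        model_version += 1
        elapsed += update_time
    return n_updates * batch_size / elapsed


# Hypothetical timings: 10 ms per environment step, 0.5 s per model update.
throughput = {
    n: simulate_on_policy(n, n_updates=3, batch_size=64, step_time=0.01, update_time=0.5)
    for n in (1, 8, 64)
}
```

In this model, throughput grows with the number of actors but can never exceed `batch_size / update_time`, because the update phase is not shortened by adding actors.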


The figure above shows the performance and scaling of the implementation. The Y-axis shows cumulative steps per second (higher is better), and the X-axis shows the number of CPU cores. Parallel efficiency is high from 3 to 19 cores and declines after that. In addition, the cumulative steps per second decrease beyond 67 cores. This is because adding remote actors only shortens the experience-generation time between the learner’s training steps. According to Amdahl’s law, adding even more actors cannot shorten the overall time below the training time, which is not parallelized. Furthermore, higher parallelism can add more runtime overhead.
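The Amdahl's law argument can be made explicit with a simple model. The symbols below are assumptions introduced for illustration and are not fitted to the measured data: $T_{\mathrm{act}}$ is the time a single actor needs to fill the experience queue, $T_{\mathrm{learn}}$ is the time of one training step, $N$ is the number of actors, and $p$ is the fraction of one iteration spent acting when $N = 1$.

$$
T(N) = T_{\mathrm{learn}} + \frac{T_{\mathrm{act}}}{N},
\qquad
p = \frac{T_{\mathrm{act}}}{T_{\mathrm{act}} + T_{\mathrm{learn}}},
\qquad
S(N) = \frac{T(1)}{T(N)} = \frac{1}{(1 - p) + \dfrac{p}{N}}
$$

$$
\lim_{N \to \infty} S(N) = \frac{1}{1 - p} = 1 + \frac{T_{\mathrm{act}}}{T_{\mathrm{learn}}}
$$

The speedup is therefore bounded by the non-parallelized training step no matter how many actors are added. If higher parallelism also adds an overhead term $c(N)$ that grows with $N$, so that $T(N) = T_{\mathrm{learn}} + T_{\mathrm{act}}/N + c(N)$, the throughput can even decrease beyond some $N$, which is consistent with the drop observed at the largest core counts.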

Conclusion

We described PFN’s efforts to scale out RL applications. We combined our RL framework, PFRL, with gRPC communication and scaled out an RL application in which the actor (environment) simulators are relatively computationally heavy. We showed a case study of on-policy PPO for a molecular graph generation application.

References:

- [1] Y. Fujita, K. Uenishi, A. Ummadisingu, P. Nagarajan, S. Masuda, and M. Y. Castro, “Distributed Reinforcement Learning of Targeted Grasping with Active Vision for Mobile Manipulators,” IROS, 2020.

- [2] J. You, B. Liu, R. Ying, V. Pande, and J. Leskovec, “Graph Convolutional Policy Network for Goal-Directed Molecular Graph Generation,” NIPS, 2018.

- [3] E. Wijmans, A. Kadian, A. Morcos, S. Lee, I. Essa, D. Parikh, M. Savva, and D. Batra, “DD-PPO: Learning Near-Perfect PointGoal Navigators from 2.5 Billion Frames.”

- [4] “Massively Large-Scale Distributed Reinforcement Learning with Menger.”

- [5] P. Nagarajan, “Introducing PFRL: A PyTorch-based Deep RL Library,” PyTorch blog.

- [6] D. Kalashnikov, A. Irpan, P. Pastor, J. Ibarz, A. Herzog, E. Jang, D. Quillen, E. Holly, M. Kalakrishnan, V. Vanhoucke, and S. Levine, “QT-Opt: Scalable Deep Reinforcement Learning for Vision-Based Robotic Manipulation,” CoRL, 2018.